Machine learning algorithms are cool because they can handle both continuous and categorical variables and make few assumptions about how the data are distributed. As the name machine learning implies, it's a highly automated process. The algorithms sometimes take a while to compute, but the computer can be left unattended during that time.

One machine learning algorithm I use in my work is random forest. This is an ensemble method that builds many decision trees instead of a single one and combines their predictions. Each tree is built from a different random subset of your whole data set, which reduces the risk of overfitting.
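To make the "many trees from different subsets" idea concrete, here is a minimal sketch in plain Python. It is a toy, not a real implementation: each "tree" is a single split (a stump), the data are synthetic, and the function names `fit_stump`, `fit_forest`, and `predict` are made up for this illustration.

```python
import random
from statistics import mean

def fit_stump(rows, targets, features):
    """Find the one split (feature, threshold) with the lowest squared error."""
    best = None
    for f in features:
        for threshold in sorted({r[f] for r in rows}):
            left = [t for r, t in zip(rows, targets) if r[f] <= threshold]
            right = [t for r, t in zip(rows, targets) if r[f] > threshold]
            if not left or not right:
                continue
            lm, rm = mean(left), mean(right)
            error = (sum((t - lm) ** 2 for t in left)
                     + sum((t - rm) ** 2 for t in right))
            if best is None or error < best[0]:
                best = (error, f, threshold, lm, rm)
    if best is None:
        # No usable split in this sample: predict the mean everywhere.
        m = mean(targets)
        return (features[0], float("inf"), m, m)
    return best[1:]

def fit_forest(rows, targets, n_trees=50, n_features=1, seed=0):
    """Train each stump on its own bootstrap sample and random feature subset."""
    rng = random.Random(seed)
    n = len(rows)
    all_features = list(range(len(rows[0])))
    forest = []
    for _ in range(n_trees):
        # Bootstrap: draw n rows with replacement, so each tree sees different data.
        idx = [rng.randrange(n) for _ in range(n)]
        # Only a random subset of the variables is considered for the split.
        features = rng.sample(all_features, n_features)
        forest.append(fit_stump([rows[i] for i in idx],
                                [targets[i] for i in idx],
                                features))
    return forest

def predict(forest, row):
    """Average the predictions of all the trees."""
    return mean(lm if row[f] <= thr else rm for f, thr, lm, rm in forest)

# Synthetic data (invented for this example): the target depends on the
# first variable only; the second variable is pure noise.
data_rng = random.Random(1)
rows = [(data_rng.random(), data_rng.random()) for _ in range(100)]
targets = [10 * a + data_rng.gauss(0, 0.5) for a, _ in rows]

forest = fit_forest(rows, targets)
print(predict(forest, (0.9, 0.5)), predict(forest, (0.1, 0.5)))
```

Real random forests grow much deeper trees and pick a fresh random subset of variables at every split, but the two ingredients shown here, bootstrap samples and averaging across trees, are the same.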

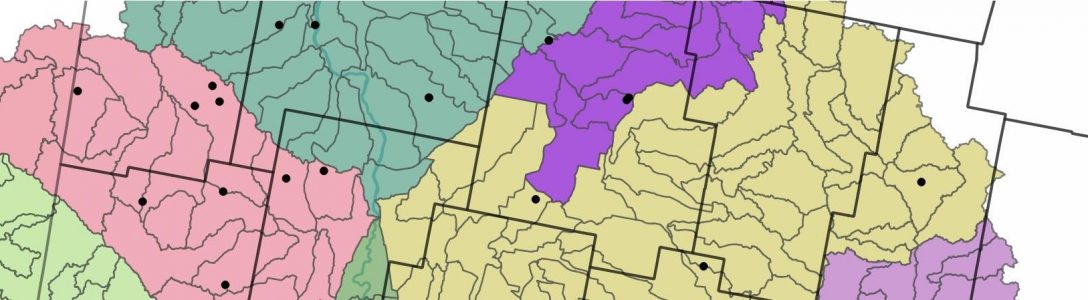

Random forest can be used to predict everything from yield to crime. It's particularly useful when you have lots of potential independent variables and want an idea of which ones have the biggest impact on your output. Another use for random forest is interpolating soil test results or other spatial point data. It can handle multicollinear data (data where lots of your input variables are correlated with each other), which is frequently the case with properties like CEC and potassium or slope and elevation.
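As a rough illustration of ranking variables by their impact, here is a short sketch using scikit-learn's `RandomForestRegressor` and its `feature_importances_` attribute. The choice of scikit-learn is my assumption for the example, and the data and the variable names (`rainfall`, `nitrogen`, `unrelated`) are synthetic and invented purely for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic data (invented): the output depends strongly on the first
# variable, weakly on the second, and not at all on the third.
rng = np.random.default_rng(0)
n = 300
names = ["rainfall", "nitrogen", "unrelated"]
X = rng.uniform(0, 1, size=(n, 3))
y = 10 * X[:, 0] + 2 * X[:, 1] + rng.normal(0, 0.5, size=n)

# Fit a forest of 200 trees; random_state makes the run repeatable.
model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(X, y)

# Impurity-based importances, one score per input variable, sorted high to low.
ranked = sorted(zip(names, model.feature_importances_),
                key=lambda pair: pair[1], reverse=True)
for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

One caveat worth keeping in mind: when two inputs are strongly correlated, the importance tends to be shared between them, so a low score for one of a correlated pair doesn't necessarily mean it is irrelevant. scikit-learn also offers `permutation_importance` in `sklearn.inspection` as an alternative way to score variables.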

Instead of explaining this in more depth, I’ll direct you to this Algobeans article. The graphics are truly excellent and I found the description and examples very clear.